Scott Liewehr, Global VP of Market Strategy and Growth at Sitecore, wrote a piece for CMSWire in April that’s well worth reading. His argument is solid:

AI doesn’t kill brand authenticity – lazy use of AI does. Brand voice was already broken before AI arrived.

He’s right.

A properly governed AI is a consistency engine, not a threat. The brands that figure this out first will have an advantage that’s genuinely hard to replicate.

But there’s a layer underneath that argument that rarely gets discussed. The philosophical case for AI and authenticity is one thing. The infrastructure that makes it actually work is another.

This post is about the infrastructure. Part 2 is about what that could look like in practice inside Sitecore.

The problem with thinking about content as documents

Most brand governance thinking operates at the document level.

Does this article sound like us?

Does this campaign feel on-brand?

Is this email consistent with our tone of voice guidelines?

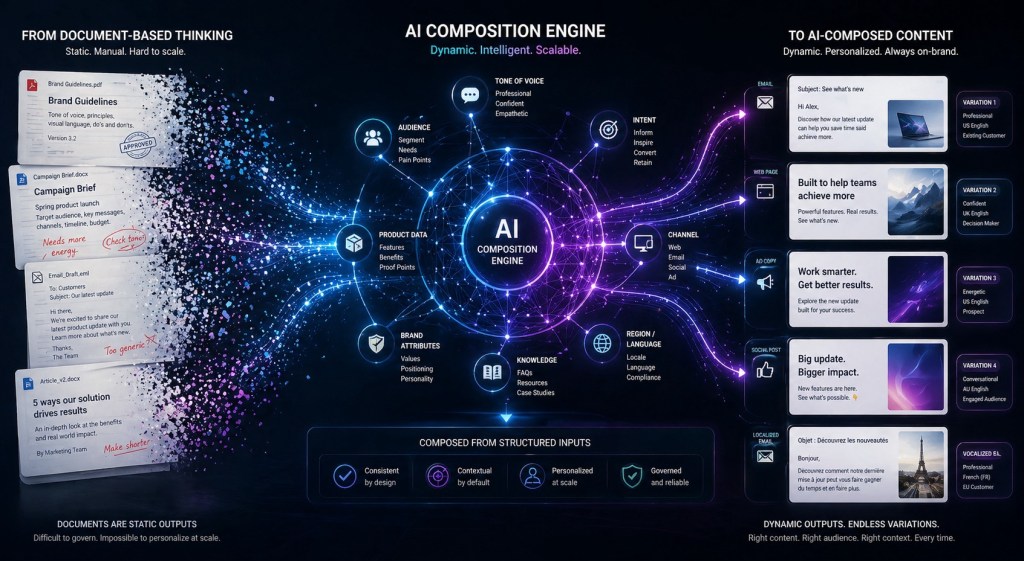

That’s the wrong unit of analysis for how AI generates content. AI doesn’t write documents.

It writes blocks.

- A headline.

- A product description.

- A CTA.

- A social variant.

- An email subject line.

Each one is generated independently and assembled into something that looks like a document only at the end.

If your governance is applied at the document level but your generation is happening at the block level, you have a gap. The review happens too late, at too high a level of abstraction, and catches problems after the fact rather than preventing them by design.

The fix is to decompose your content into blocks first – and apply authenticity governance at the block level.

What a content block actually is

A block is the smallest meaningful unit of content that carries brand intent. It can be structural or channel-specific – and most organisations will need both dimensions.

- Structural blocks – the components that make up a piece of content regardless of channel: headline, body copy, product claim, CTA, legal disclaimer, supporting statistic.

- Channel blocks – the same content adapted for different surfaces: web page variant, email variant, social short-form, paid ad copy, print adaptation.

A single campaign might have eight structural block types across five channels.

That’s forty governed units of content before you’ve written a word.

The authenticity question isn’t “does this campaign sound like us?” It’s “does each of these forty blocks independently carry the right voice, tone, and brand attributes for its context?”

That’s a governance architecture problem. Not a copywriting problem.
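The forty governed units come from a simple cross product of block types and channels. A minimal Python sketch, using only the six structural block types and five channels listed above (the example campaign’s other two block types aren’t named, so they’re left out). The identifiers are illustrative, not a Content Hub schema:

```python
from itertools import product

# Structural block types named in the post. The example campaign has eight,
# but only these six are listed, so the other two are omitted here.
STRUCTURAL_BLOCKS = ["headline", "body_copy", "product_claim", "cta",
                     "legal_disclaimer", "supporting_statistic"]

# Channel surfaces named in the post.
CHANNELS = ["web", "email", "social_short_form", "paid_ad", "print"]


def governed_units(block_types, channels):
    """Return every (block type, channel) pair that needs its own constraints."""
    return list(product(block_types, channels))


units = governed_units(STRUCTURAL_BLOCKS, CHANNELS)
print(len(units))  # 30 = 6 block types x 5 channels
print(units[0])    # ('headline', 'web')
```

With the campaign’s full eight block types the same function yields forty pairs. Each pair is a separate governance target, so adding one channel adds one unit per block type.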

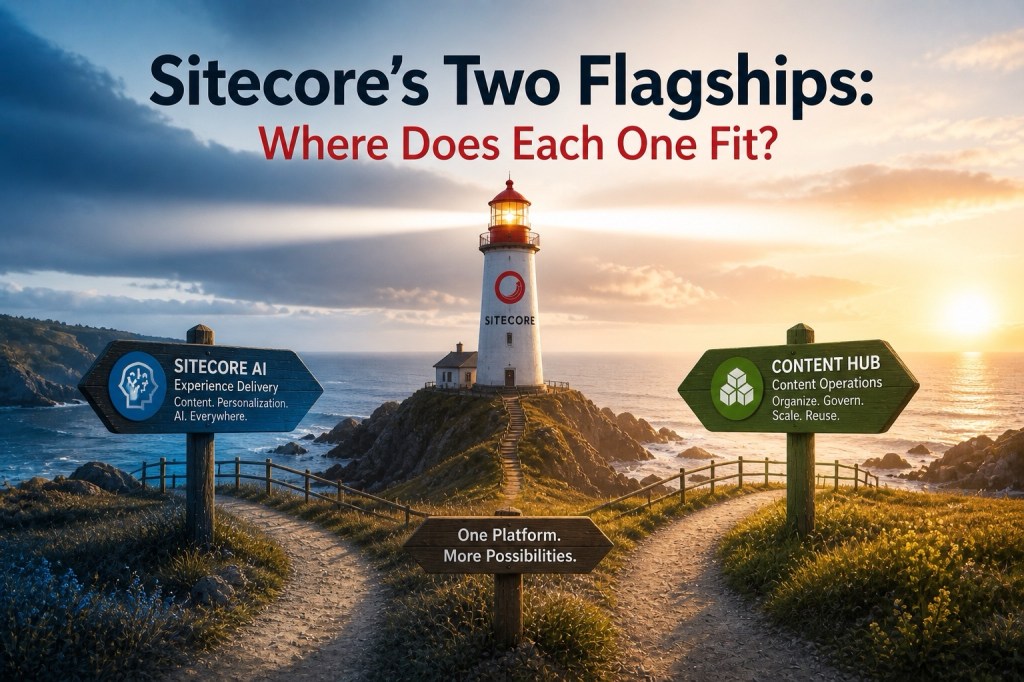

Where Content Hub fits

Content Hub’s entity model is what makes block-level governance possible at scale.

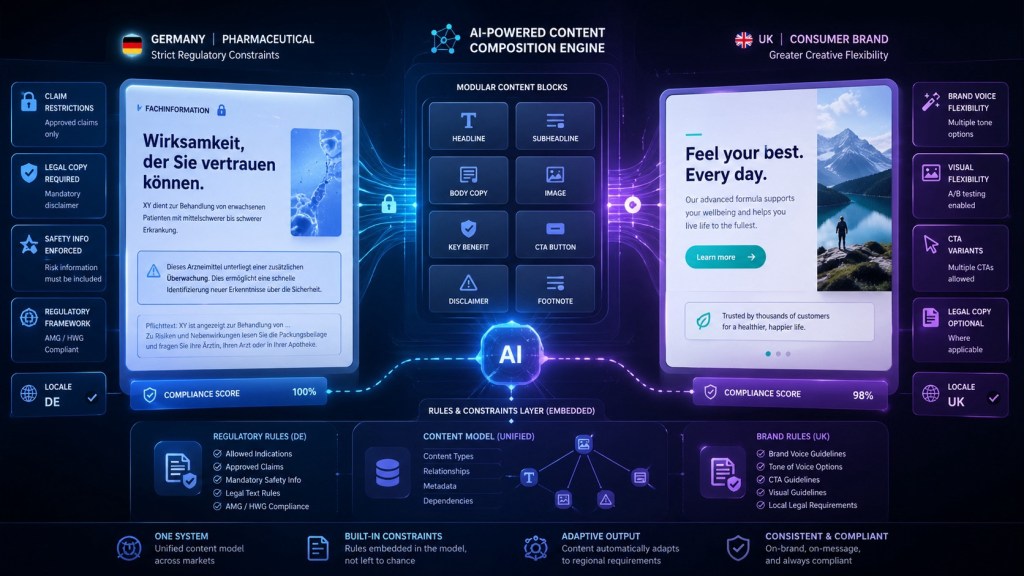

Each block type becomes an entity with its own taxonomy – brand attributes, tone markers, channel constraints, approval state, rights information.

A headline block for a pharmaceutical product in the German market carries different constraints to a headline block for a consumer brand in the UK. Those constraints are modelled into the entity, not left to the discretion of whoever is generating the content that day.
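To make “modelled into the entity” concrete, here is a hypothetical Python sketch of a block-type entity carrying its own constraints. `BlockTypeEntity`, its field names and the example values are all invented for illustration; they are not Content Hub’s entity definitions or API:

```python
from dataclasses import dataclass, field, fields
from enum import Enum


class ApprovalState(Enum):
    DRAFT = "draft"
    IN_REVIEW = "in_review"
    APPROVED = "approved"


@dataclass
class BlockTypeEntity:
    """Hypothetical block-type entity: constraints live in data, not in a writer's head.

    Field names are illustrative only; they are not Content Hub's schema.
    """
    block_type: str
    market: str
    brand_attributes: list[str]
    tone_markers: list[str]
    max_chars: int
    banned_terms: list[str] = field(default_factory=list)
    requires_disclaimer: bool = False
    approval_state: ApprovalState = ApprovalState.DRAFT
    rights: str = "owned"


def differing_constraints(a: BlockTypeEntity, b: BlockTypeEntity) -> list[str]:
    """List the fields on which two block-type entities disagree."""
    return [f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)]


# Example values are invented for illustration.
pharma_de_headline = BlockTypeEntity(
    block_type="headline", market="de-DE",
    brand_attributes=["trustworthy", "precise"], tone_markers=["formal", "factual"],
    max_chars=60, banned_terms=["cure", "guaranteed"], requires_disclaimer=True,
)
consumer_uk_headline = BlockTypeEntity(
    block_type="headline", market="en-GB",
    brand_attributes=["playful", "warm"], tone_markers=["informal", "upbeat"],
    max_chars=80,
)

print(differing_constraints(pharma_de_headline, consumer_uk_headline))
# ['market', 'brand_attributes', 'tone_markers', 'max_chars', 'banned_terms', 'requires_disclaimer']
```

Both are “headline” blocks, but almost every constraint differs, and all of it is visible to any system that generates or reviews content against the entity.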

The DAM provides the asset governance layer for visual blocks – approved imagery, licensed photography, brand-compliant graphics – all tagged and rights-managed at the same granularity as the copy blocks.

The CMP layer is where the campaign structure comes together – a campaign entity that references approved blocks, tracks which variants exist, and manages the editorial workflow from brief to approval to distribution.

This applies whether you’re running Content Hub standalone or as part of the broader SitecoreAI ecosystem. The block library is platform-agnostic – it feeds whatever delivery layer you’re using.
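The CMP role described above, a campaign that references only approved blocks and moves from brief to approval to distribution, can be sketched as a small entity that enforces both rules. This is a hypothetical Python model; `Campaign`, `add_variant` and `advance` are illustrative names, not Content Hub CMP APIs:

```python
from dataclasses import dataclass, field

# Stage names follow the workflow described in the post; the model itself is hypothetical.
STAGES = ("brief", "approval", "distribution")


@dataclass
class Campaign:
    """Hypothetical campaign entity that may only reference approved blocks."""
    name: str
    approved_library: set[str]  # IDs of blocks already approved
    stage: str = "brief"
    variants: dict[str, list[str]] = field(default_factory=dict)  # channel -> block IDs

    def add_variant(self, channel: str, block_ids: list[str]) -> None:
        """Register a channel variant, rejecting any block not in the approved library."""
        unapproved = [b for b in block_ids if b not in self.approved_library]
        if unapproved:
            raise ValueError(f"blocks not in approved library: {unapproved}")
        self.variants[channel] = list(block_ids)

    def advance(self) -> str:
        """Move the campaign one step along brief -> approval -> distribution."""
        i = STAGES.index(self.stage)
        if i == len(STAGES) - 1:
            raise ValueError("campaign is already in distribution")
        self.stage = STAGES[i + 1]
        return self.stage


campaign = Campaign("spring_launch", approved_library={"hl-001", "cta-004"})
campaign.add_variant("email", ["hl-001", "cta-004"])
campaign.advance()
print(campaign.stage, campaign.variants)  # approval {'email': ['hl-001', 'cta-004']}
```

Because the check sits in `add_variant`, an unapproved block can’t enter a campaign at all, rather than being caught later in review.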

Where SitecoreAI’s Agentic Studio takes it further

If Content Hub provides the governed block library, Agentic Studio is where AI puts it to work.

An agent can be configured to generate content by selecting from approved block types, applying the brand voice constraints defined in the taxonomy, and assembling variants for different channels, all within guardrails that make off-brand content structurally difficult to produce rather than just discouraged by policy.
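A guardrail of this kind amounts to checking every candidate before the author ever sees it. A minimal Python sketch, assuming constraints reduce to simple length and vocabulary rules; `Constraints`, `violations` and `generate_within` are hypothetical names, the list of drafts stands in for a model, and none of this reflects how Agentic Studio is actually configured:

```python
import re
from dataclasses import dataclass
from itertools import islice
from typing import Iterable, Optional


@dataclass(frozen=True)
class Constraints:
    """Hypothetical per-block constraints pulled from the taxonomy."""
    max_chars: int
    banned_terms: tuple[str, ...] = ()
    required_terms: tuple[str, ...] = ()


def violations(text: str, c: Constraints) -> list[str]:
    """Return every constraint the text breaks (empty list = compliant)."""
    problems = []
    if len(text) > c.max_chars:
        problems.append(f"too long: {len(text)} > {c.max_chars}")
    lowered = text.lower()
    for term in c.banned_terms:
        # Whole-word match, so "cure" does not flag "secure".
        if re.search(rf"\b{re.escape(term.lower())}\b", lowered):
            problems.append(f"banned term: {term}")
    for term in c.required_terms:
        if term.lower() not in lowered:
            problems.append(f"missing term: {term}")
    return problems


def generate_within(drafts: Iterable[str], c: Constraints, max_attempts: int = 5) -> Optional[str]:
    """Return the first draft that satisfies the constraints, or None.

    `drafts` stands in for whatever model produces candidates; a
    non-compliant draft is never returned to the author.
    """
    for draft in islice(drafts, max_attempts):
        if not violations(draft, c):
            return draft
    return None


headline_rules = Constraints(max_chars=40, banned_terms=("guaranteed",))
drafts = ["Guaranteed results in seven days!", "Clinically studied. Carefully made."]
print(generate_within(drafts, headline_rules))  # Clinically studied. Carefully made.
```

Length and vocabulary are the easy cases; tone is much harder to check mechanically, so treat this as the shape of the guardrail rather than its full content.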

This is governance at authoring time, not just approval time.

At authoring time, the agent knows which block types are available, what constraints apply to each, and what the brand voice parameters are for this campaign, this market, this channel. It doesn’t start from a blank page; it starts from a constrained solution space where most of the authenticity work is already done by the architecture.

At approval time, a human checkpoint validates that the assembled blocks meet the intent of the brief before they enter the approved library. The review is faster because it’s checking against defined parameters rather than making subjective judgements about whether something feels right.
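Checking against defined parameters can be as mechanical as a checklist diff run before the human looks at anything. A sketch, assuming the brief can be reduced to the block types each channel requires; `review` and both data shapes are hypothetical:

```python
def review(brief: dict[str, set[str]], assembled: dict[str, dict[str, str]]) -> list[str]:
    """Check assembled blocks against the block types the brief requires per channel.

    brief:     channel -> block types the brief requires (hypothetical structure)
    assembled: channel -> {block type: text}
    Returns a sorted list of issues; an empty list means the checklist passes.
    """
    issues = []
    for channel, required in brief.items():
        present = assembled.get(channel, {})
        for block_type in required - set(present):
            issues.append(f"{channel}: missing {block_type}")
        for block_type, text in present.items():
            if not text.strip():
                issues.append(f"{channel}: empty {block_type}")
    for channel in set(assembled) - set(brief):
        issues.append(f"{channel}: channel not in brief")
    return sorted(issues)


# Invented example data.
brief = {"email": {"headline", "cta", "legal_disclaimer"},
         "social_short_form": {"headline", "cta"}}
assembled = {"email": {"headline": "Clinically studied.", "cta": "Find out more"},
             "social_short_form": {"headline": "Carefully made.", "cta": " "},
             "print": {"headline": "Carefully made."}}

for issue in review(brief, assembled):
    print(issue)
# email: missing legal_disclaimer
# print: channel not in brief
# social_short_form: empty cta
```

The human reviewer is then left with the part no checklist can answer: whether the blocks meet the intent of the brief.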

Authenticity stops being a review activity and becomes a design constraint. You don’t check if the content is on-brand at the end. You build the system so off-brand content is hard to create in the first place.

This is what Scott’s argument actually requires

The conductor analogy in Scott’s piece is the right one. AI as a conductor that never forgets the score.

But a conductor needs a score. And the score needs to be written at the right level of granularity.

A brand voice document is not a score. It’s a set of principles. Principles don’t prevent drift at scale – they describe the intention behind a system that hasn’t been built yet.

The score is a governed block library. Taxonomy that defines what each block type is permitted to say and how it’s permitted to say it. Approval workflows that validate blocks before they enter production. Agents that generate within those constraints rather than from scratch.

That’s the infrastructure argument. The philosophy is right. The question is whether the organisation has done the hard work of translating that philosophy into a content architecture that AI can actually operate within.

Most haven’t. Which is why most AI content still sounds like LinkedIn.

The practical starting point

You don’t have to build the full block library before you start.

A useful first step is to audit your last three months of content and identify the ten block types that appear most frequently and cause the most inconsistency.
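Once the audit has tagged each block with its type and whether it was flagged as inconsistent, picking the ten is a ranking exercise. A minimal sketch, assuming that tagging has already been done; the record format and `top_block_types` are hypothetical, and the sample data is invented:

```python
from collections import Counter


def top_block_types(records: list[tuple[str, bool]], n: int = 10) -> list[str]:
    """Rank block types from an audit by inconsistency, then by frequency.

    records: (block_type, flagged_inconsistent) pairs, one per block in the audit.
    Ties are broken alphabetically so the output is deterministic.
    """
    totals = Counter(block_type for block_type, _ in records)
    flagged = Counter(block_type for block_type, bad in records if bad)
    ranked = sorted(totals, key=lambda bt: (-flagged[bt], -totals[bt], bt))
    return ranked[:n]


records = [("headline", True), ("headline", True), ("headline", False),
           ("cta", True), ("cta", False), ("cta", False), ("cta", False),
           ("legal_disclaimer", False)]
print(top_block_types(records, n=2))  # ['headline', 'cta']
```

Ranking by the count of flagged instances, rather than by the flag rate, favours block types that are both common and error-prone, which is the combination the audit is looking for.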

Those ten block types are your first governed entities. Define the constraints. Build the taxonomy. Create the approval workflow. Feed them into the system.

Then measure whether the content produced from those governed blocks is more consistent than what came before.

It will be.

And that proof point is what gets the organisation to invest in the broader architecture.
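One way to make “more consistent” measurable is to run the same constraint checks over content from before and after governance, then compare pass rates per block type. A sketch under that assumption; the metric and the names `pass_rate` and `consistency_delta` are illustrative, and the sample data is invented:

```python
def pass_rate(results: list[bool]) -> float:
    """Share of blocks that passed their constraint check."""
    return sum(results) / len(results) if results else 0.0


def consistency_delta(before: dict[str, list[bool]],
                      after: dict[str, list[bool]]) -> dict[str, float]:
    """Change in pass rate per block type, for types measured in both periods."""
    shared = sorted(set(before) & set(after))
    return {bt: round(pass_rate(after[bt]) - pass_rate(before[bt]), 3) for bt in shared}


before = {"headline": [True, False, False, True], "cta": [True, True, False, False]}
after = {"headline": [True, True, True, False], "cta": [True, True, True, True]}
print(consistency_delta(before, after))  # {'cta': 0.5, 'headline': 0.25}
```

Restricting the comparison to block types measured in both periods avoids reading a missing sample as a drop in consistency.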
