My first blog made the case for block-level content governance as the infrastructure underneath Scott Liewehr’s argument about AI and authenticity. If you haven’t read it, start there.

This post is about governance specifically – what it means for AI-generated content, why it’s no longer optional, and what it could look like inside Sitecore Content Hub if the platform extended its entity model in this direction.

To be clear upfront: what you’re about to see is a concept. These are not existing Content Hub features.

This is how I think the platform could extend what it already does well to meet a regulatory and operational need that’s arriving fast.

Why governance is no longer optional

Most organisations treat AI content governance as an internal quality problem.

- Does the output sound right?

- Is it on-brand?

- Did a human check it?

That framing is already out of date.

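The EU AI Act (Regulation (EU) 2024/1689) entered into force in August 2024, and its obligations phase in between 2025 and 2027. Article 50 of the final text (Article 52 in the Commission’s original proposal) introduces transparency obligations for AI systems that interact with people or generate content – including content that users might reasonably assume was written by a human. Those obligations are scheduled to apply from August 2026.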

If your AI is generating campaign copy, product descriptions, or any customer-facing content, you are operating in regulated territory whether you’ve acknowledged it or not.

The obligations are not vague.

Organisations must:

- Disclose when content has been AI-generated in contexts where a person could be misled

- Maintain records of AI system use sufficient to demonstrate compliance

- Ensure human oversight is in place for higher-risk AI outputs

- Apply appropriate governance to systems that could cause harm through deception

A brand voice document and a content approval workflow are not a governance framework. Together they are a quality process.

Governance means documented rulesets, immutable audit trails, disclosure mechanisms, and human review gates that are enforced by the system – not left to individual judgment.

Content Hub’s entity model and workflow engine are, in my view, exactly the right foundation to build that on. Here’s what it could look like.

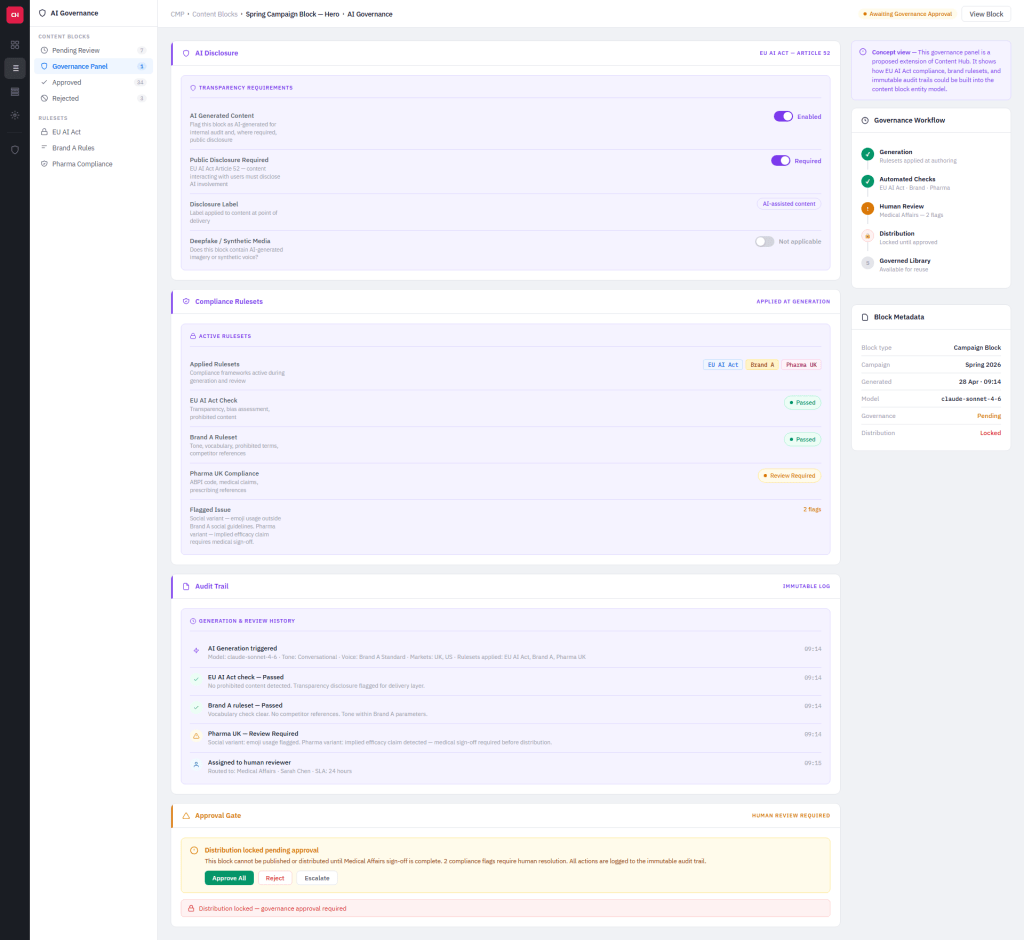

The governance panel

This is a concept governance panel sitting on a campaign block entity in Content Hub.

It’s not a separate system; it’s an extension of the entity model.

Everything is attached to the block, logged against it, and enforced through it.

Four sections. Each one addresses a distinct governance requirement.

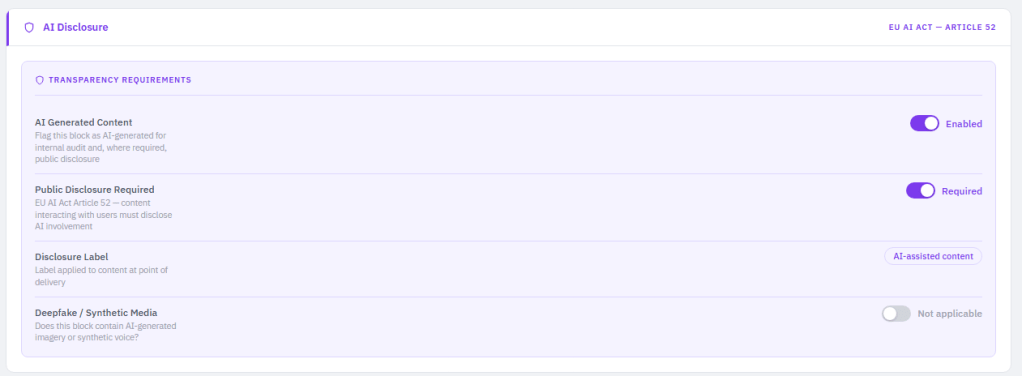

AI Disclosure

The first section addresses the EU AI Act’s transparency requirements directly.

An AI-generated flag is applied to the block at generation time – not as a note in a spreadsheet, but as a property of the entity itself.

That flag travels with the block wherever it goes. When the block is approved and enters the governed library, its disclosure state is part of its permanent record.

Public disclosure is a separate toggle – because not every context requires customer-facing disclosure, but the system needs to know which ones do.

A campaign block used in a product comparison context carries different obligations than a brand awareness post.

The entity knows which channels it’s tagged for, and the disclosure requirement is configured at the ruleset level rather than decided case by case.

Where disclosure is required, a label is applied at delivery. In this concept it’s “AI-assisted content” – configurable against the brand and regulatory ruleset rather than hardcoded.

The synthetic media flag handles a separate EU AI Act obligation: deepfakes and other AI-generated imagery carry their own disclosure requirements. The flag is toggled off here because this block is copy-only, but for a block that includes AI-generated images it would trigger an additional disclosure requirement at delivery.

The point of this section is that disclosure becomes a property of the content, not a manual step someone has to remember to take.
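To make that concrete, here is a minimal Python sketch of delivery-time disclosure, assuming the disclosure properties described above are available on the block. The channel names, the `DISCLOSURE_RULES` table and the synthetic-media label text are hypothetical placeholders rather than Content Hub configuration, and the sketch deliberately treats a channel nobody has classified as requiring disclosure.

```python
from dataclasses import dataclass

# Hypothetical ruleset-level configuration: does AI-generated content need
# customer-facing disclosure on this channel? Not a real Content Hub setting.
DISCLOSURE_RULES = {
    "product-comparison": True,
    "brand-awareness": False,
}


@dataclass(frozen=True)
class AiDisclosure:
    ai_generated_content: bool
    deepfake_synthetic_media: bool
    disclosure_label: str = "AI-assisted content"


def labels_for_delivery(disclosure: AiDisclosure, channel: str) -> list[str]:
    """Return the disclosure labels to render when the block is delivered to `channel`."""
    labels: list[str] = []
    # Channels missing from the table default to requiring disclosure (fail closed).
    if disclosure.ai_generated_content and DISCLOSURE_RULES.get(channel, True):
        labels.append(disclosure.disclosure_label)
    # Synthetic imagery triggers its own, additional disclosure.
    if disclosure.deepfake_synthetic_media:
        labels.append("AI-generated imagery")  # hypothetical label text
    return labels


block = AiDisclosure(ai_generated_content=True, deepfake_synthetic_media=False)
print(labels_for_delivery(block, "product-comparison"))  # ['AI-assisted content']
print(labels_for_delivery(block, "brand-awareness"))     # []
```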

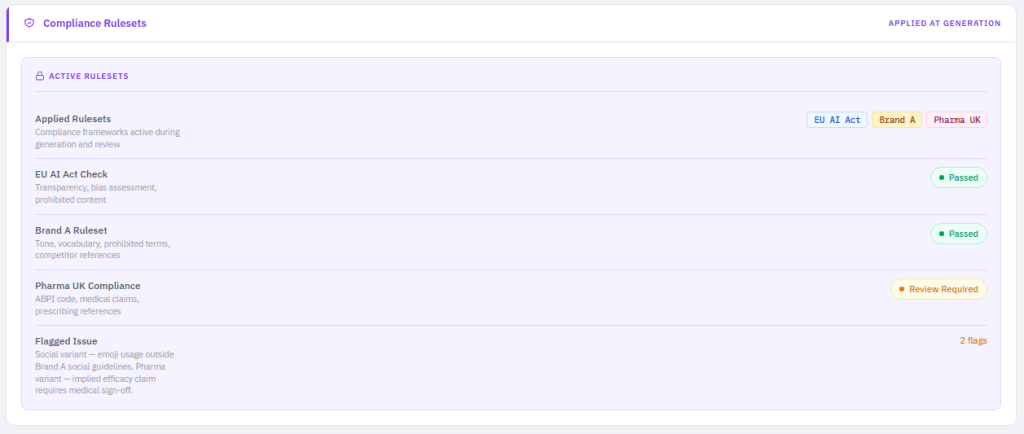

Compliance Rulesets

This is where governance constraints are applied and checked.

Three rulesets are active on this block:

- EU AI Act

- Brand A

- Pharma UK

They aren’t selected manually for each block – they’re inherited from the entity’s taxonomy. The block is tagged Brand A, tagged for pharmaceutical markets, tagged for UK and US.

The system applies the relevant rulesets automatically.
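A minimal sketch of that inheritance, assuming the block’s taxonomy tags are available as plain strings. The tag format and the `RULESET_TRIGGERS` table are hypothetical; applying the EU AI Act ruleset to every AI-generated block is modelled here as an organisational policy choice, not a legal determination.

```python
# Hypothetical mapping from ruleset to the taxonomy tags that trigger it.
# A ruleset applies when the block carries all of its trigger tags.
RULESET_TRIGGERS = {
    "EU AI Act": frozenset({"ai-generated"}),  # policy choice: baseline for all AI output
    "Brand A": frozenset({"brand:Brand A"}),
    "Pharma UK": frozenset({"sector:pharma", "market:UK"}),
}


def rulesets_for(tags: set[str]) -> list[str]:
    """Derive the active rulesets from the block's taxonomy tags, in table order."""
    return [name for name, required in RULESET_TRIGGERS.items() if required <= tags]


block_tags = {"ai-generated", "brand:Brand A", "sector:pharma", "market:UK", "market:US"}
print(rulesets_for(block_tags))  # ['EU AI Act', 'Brand A', 'Pharma UK']
```

Retagging the block, for example removing the UK market, changes its rulesets without anyone editing governance settings on the block directly.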

Each ruleset runs its check at generation time and surfaces a clear result:

- EU AI Act – checks for prohibited content categories, bias indicators, and transparency requirements. Passed.

- Brand A – checks tone against the brand voice taxonomy, flags prohibited vocabulary, checks for competitor references. Passed.

- Pharma UK – checks against ABPI code requirements, medical claims, and implied efficacy language. Review required.
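The check-and-aggregate step could look something like this Python sketch. Only the Pharma UK check is implemented, using a deliberately naive pattern for an implied efficacy claim; the rule code is illustrative, the status strings follow the JSON example in this post, and the class and function names are hypothetical. A real ABPI check would need far more than a regular expression.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Flag:
    rule_code: str
    detail: str
    sign_off_team: str


@dataclass
class RulesetResult:
    name: str
    flags: list[Flag] = field(default_factory=list)

    @property
    def status(self) -> str:
        # A flag doesn't fail the block; it marks it for human review.
        return "reviewRequired" if self.flags else "passed"


# Deliberately naive, hypothetical pattern for an unqualified speed-of-effect claim.
EFFICACY_PATTERN = re.compile(r"\bresults in (days|hours|minutes)\b", re.IGNORECASE)


def check_pharma_uk(body: str) -> RulesetResult:
    result = RulesetResult("Pharma UK")
    match = EFFICACY_PATTERN.search(body)
    if match:
        result.flags.append(Flag(
            rule_code="PHARMA-UK-12.4",
            detail=f"'{match.group(0)}' is an unqualified efficacy claim.",
            sign_off_team="Medical Affairs",
        ))
    return result


def overall_status(results: list[RulesetResult]) -> str:
    return "reviewRequired" if any(r.status != "passed" for r in results) else "passed"


body = "Results in days, not weeks. Our formula is clinically tested."
# EU AI Act and Brand A checks are stubbed as passing to keep the sketch short.
results = [RulesetResult("EU AI Act"), RulesetResult("Brand A"), check_pharma_uk(body)]
print([r.status for r in results])  # ['passed', 'passed', 'reviewRequired']
print(overall_status(results))      # reviewRequired
```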

That last result is the important one. The pharma check flags a potential issue, but the system doesn’t block generation because of it.

It generates the content, flags the specific problem (an implied efficacy claim in one variant) and routes it to the appropriate reviewer: Medical Affairs, with a 24-hour SLA.

This is the distinction between governance and gatekeeping. The system doesn’t stop work. It catches issues at the right point in the workflow and routes them to the right people rather than letting them reach publication.
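Routing could be sketched as follows, assuming each flag names the team that must sign it off and each team has a default reviewer and SLA. The `ROUTING` table and the 48-hour Legal entry are hypothetical; the 24-hour Medical Affairs SLA follows the scenario above and the `sarah.chen` reviewer follows the JSON example in this post.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical routing table: sign-off team -> (default reviewer, SLA in hours).
ROUTING = {
    "Medical Affairs": ("sarah.chen", 24),
    "Legal": ("legal.review", 48),
}


def iso_z(moment: datetime) -> str:
    """Format a timestamp in UTC with a trailing Z."""
    return moment.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")


def route_for_review(sign_off_teams: list[str], now: datetime) -> list[dict]:
    """Create one review assignment per team named by the outstanding flags."""
    assignments = []
    for team in dict.fromkeys(sign_off_teams):  # de-duplicate, keep first-seen order
        reviewer, sla_hours = ROUTING[team]  # KeyError if a team has no route configured
        assignments.append({
            "assignedTo": reviewer,
            "team": team,
            "assignedAt": iso_z(now),
            "expiresAt": iso_z(now + timedelta(hours=sla_hours)),
        })
    return assignments


# Both Pharma UK flags need Medical Affairs, so they produce a single assignment.
now = datetime(2025, 5, 2, 9, 17, tzinfo=timezone.utc)
print(route_for_review(["Medical Affairs", "Medical Affairs"], now))
```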

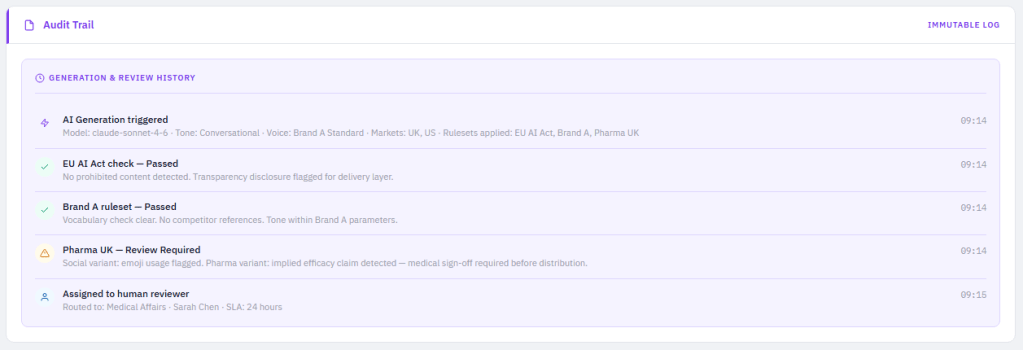

Audit Trail

This section is perhaps the most important from a regulatory standpoint – and the least glamorous.

Every action on the block is logged immutably.

- The model used to generate it and the parameters applied.

- Which rulesets ran and what they found.

- When the block was assigned for review and to whom.

This isn’t optional under the EU AI Act.

Organisations need to be able to demonstrate that appropriate governance was in place. A content history sitting in a CMS revision log is not that demonstration.

What’s needed is an immutable log attached to the entity, written at the time of each action rather than reconstructed later.

Look at what the audit trail captures in this concept:

- AI generation triggered – model version, tone, voice constraint, markets, rulesets applied

- EU AI Act check – result and detail

- Brand A ruleset – result and detail

- Pharma UK – flag raised, specific issue noted

- Assigned to human reviewer – who, which team, SLA

Every entry is timestamped. Every entry is structured data. If a regulator asks how this content was produced and what oversight was in place, the answer is in the entity record – not in someone’s email history.

Content Hub’s existing audit infrastructure is already close to this.

The extension is making it AI-aware – capturing generation parameters and ruleset outcomes as structured entity data rather than just a timestamp and a username.
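How immutability is enforced is an implementation choice. One common technique is hash chaining: each entry stores a SHA-256 hash of the previous entry, so an edited or reordered entry breaks verification. The Python sketch below assumes that technique; `AuditTrail` and its methods are hypothetical, not a Content Hub API. Hash chaining on its own makes tampering detectable rather than impossible, and only if the latest hash is also recorded somewhere the editor can’t reach; genuinely immutable storage would still need write-once guarantees underneath.

```python
import copy
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditTrail:
    """Append-only, hash-chained audit log. A sketch, not a Content Hub API."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash, with a canonical key order.
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def append(self, event: str, actor: str, detail: dict) -> dict:
        entry = {
            "entryId": f"AUD-{len(self._entries) + 1:03d}",
            "event": event,
            "timestamp": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
            "actor": actor,
            "detail": copy.deepcopy(detail),
            "prevHash": self._entries[-1]["hash"] if self._entries else GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)
        return copy.deepcopy(entry)  # callers get a copy, never the stored entry

    def verify(self) -> bool:
        """Recompute the chain; an edited or reordered entry makes it fail."""
        prev_hash = GENESIS
        for entry in self._entries:
            if entry["prevHash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.append("aiGenerationTriggered", "system.ai", {"model": "claude-sonnet-4-6"})
trail.append("rulesetCheckPassed", "system.compliance", {"ruleset": "EU AI Act"})
print(trail.verify())  # True
trail._entries[0]["detail"]["model"] = "other-model"  # simulate a retroactive edit
print(trail.verify())  # False
```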

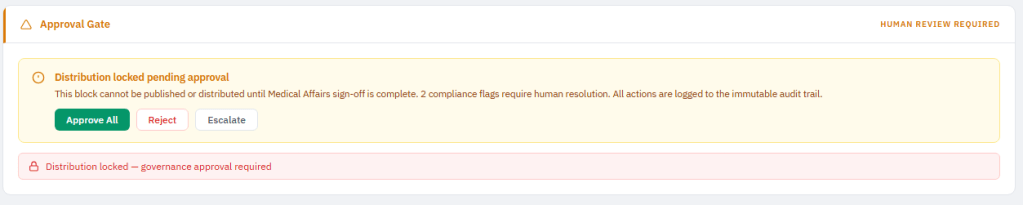

Approval Gate

Governance without enforcement is a policy document. The approval gate is the enforcement mechanism.

The block cannot be published or distributed until the flagged issues are resolved and a qualified reviewer has signed off. The distribution lock is applied automatically when compliance flags are outstanding. It is lifted automatically when the required approvals are complete.
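A minimal Python sketch of that behaviour, assuming sign-off is recorded per team. Here the lock is derived from the block’s state at publish time rather than stored as a toggle, so it lifts only when every flag that requires sign-off is covered. `ComplianceFlag`, `distribution_locked`, `publish` and `DistributionLockedError` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComplianceFlag:
    flag_id: str
    sign_off_team: str
    requires_sign_off: bool = True


class DistributionLockedError(RuntimeError):
    """Raised when something tries to distribute a block that hasn't cleared governance."""


def distribution_locked(flags: list[ComplianceFlag], signed_off_teams: set[str]) -> bool:
    """Locked while any flag still needs sign-off from a team that hasn't given it."""
    return any(f.requires_sign_off and f.sign_off_team not in signed_off_teams for f in flags)


def publish(block_id: str, flags: list[ComplianceFlag], signed_off_teams: set[str]) -> str:
    # The lock is recomputed at the moment of publishing, not read from a stored toggle.
    if distribution_locked(flags, signed_off_teams):
        raise DistributionLockedError(f"{block_id}: unresolved compliance flags")
    return f"{block_id} released for distribution"


flags = [ComplianceFlag("FLAG-001", "Medical Affairs"), ComplianceFlag("FLAG-002", "Medical Affairs")]
print(distribution_locked(flags, set()))                # True
print(publish("CB-29401", flags, {"Medical Affairs"}))  # CB-29401 released for distribution
```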

The approver has three options:

- Approve all – the reviewer is satisfied that the flagged issues have been addressed or are acceptable in context. The block proceeds.

- Reject – the block is returned for revision. The rejection reason is logged to the audit trail.

- Escalate – routes to a higher authority without the content reviewer having to make a call they’re not qualified to make.

In a pharma context, that might be regulatory affairs or legal. In a financial services context, it might be compliance.

The escalation path is configurable.

All of those decisions are logged. The audit trail is updated with every action. The block either enters the governed library as an approved and compliant asset – or it doesn’t move.

That last point matters. The distribution lock isn’t a reminder. It’s a hard stop. Content that hasn’t cleared governance cannot reach a channel.
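The three decisions can be sketched as transitions on the block’s governance state. This is a minimal Python sketch under stated assumptions: the block is a plain dict, the status values other than `awaitingReview` are hypothetical, and `ESCALATION_PATHS` stands in for the configurable escalation path, with illustrative team sequences for pharma and financial services.

```python
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    REJECT = "reject"
    ESCALATE = "escalate"


# Hypothetical, configurable escalation paths: decision-makers in order of authority.
ESCALATION_PATHS = {
    "pharma": ["Medical Affairs", "Regulatory Affairs", "Legal"],
    "financial-services": ["Content Review", "Compliance"],
}


def apply_decision(block: dict, decision: Decision, actor: str, reason: str = "") -> dict:
    """Return an updated copy of `block` after a reviewer decision, with the action recorded."""
    updated = {**block, "approvalActions": list(block["approvalActions"])}
    if decision is Decision.APPROVE:
        updated["approvalStatus"] = "approved"
    elif decision is Decision.REJECT:
        if not reason:
            raise ValueError("a rejection must record a reason")
        updated["approvalStatus"] = "returnedForRevision"
    else:
        path = ESCALATION_PATHS[block["sector"]]
        level = path.index(block["reviewLevel"])
        if level + 1 == len(path):
            raise ValueError("already at the top of the escalation path")
        updated["reviewLevel"] = path[level + 1]
        updated["approvalStatus"] = "awaitingReview"
    # Distribution stays locked for every outcome except approval.
    updated["distributionLocked"] = updated["approvalStatus"] != "approved"
    updated["approvalActions"].append({"decision": decision.value, "actor": actor, "reason": reason})
    return updated


block = {"id": "CB-29401", "sector": "pharma", "reviewLevel": "Medical Affairs",
         "approvalStatus": "awaitingReview", "distributionLocked": True, "approvalActions": []}
escalated = apply_decision(block, Decision.ESCALATE, "sarah.chen")
print(escalated["reviewLevel"], escalated["distributionLocked"])  # Regulatory Affairs True
```

Returning a new dict rather than mutating the input keeps each prior state available to be written to the audit trail.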

Here is an example of what the resulting entity record could look like in JSON.

```json
{
  "entity": {
    "id": "CB-29401",
    "entityType": "EPAM.ContentBlock",
    "schemaVersion": "1.4.0",
    "properties": {
      "title": "Spring Campaign Block – Hero",
      "blockType": "heroCopy",
      "slug": "spring-campaign-hero-2025",
      "locale": "en-GB",
      "markets": ["UK", "DE", "FR"],
      "campaign": {
        "id": "CAMP-1042",
        "name": "Spring 2025"
      },
      "createdAt": "2025-05-02T09:14:00Z",
      "createdBy": {
        "id": "USR-001",
        "username": "system.ai",
        "displayName": "AI Orchestrator"
      }
    },
    "content": {
      "headline": "Rediscover what spring feels like.",
      "body": "Results in days – not weeks. Our formula is clinically tested and dermatologist approved for sensitive skin types.",
      "callToAction": {
        "label": "Shop the range",
        "url": "/spring-2025"
      }
    },
    "aiDisclosure": {
      "aiGeneratedContent": true,
      "publicDisclosureRequired": true,
      "deepfakeSyntheticMedia": false,
      "disclosureLabel": {
        "taxonomyId": "EPAM.DisclosureLabel.AIAssisted",
        "label": "AI-assisted content"
      },
      "generationMetadata": {
        "model": "claude-sonnet-4-6",
        "modelVersion": "20250514",
        "prompt": {
          "tone": "professional",
          "voiceConstraint": "Brand A",
          "wordLimit": 80,
          "targetAudience": "adults 30-55",
          "instructionSetId": "INSTR-Brand-A-Spring-2025"
        },
        "generatedAt": "2025-05-02T09:14:00Z",
        "tokenCount": {
          "input": 312,
          "output": 87
        }
      },
      "regulatoryBasis": {
        "regulation": "EU AI Act",
        "articleReference": "Article 50",
        "complianceVersion": "2024-08",
        "requiresDisclosureOnPublish": true
      }
    },
    "compliance": {
      "appliedRulesets": [
        {
          "rulesetId": "RS-EU-AI-ACT",
          "name": "EU AI Act",
          "version": "2024-08",
          "status": "passed",
          "checkedAt": "2025-05-02T09:15:00Z",
          "flags": []
        },
        {
          "rulesetId": "RS-BRAND-A",
          "name": "Brand A",
          "version": "3.1",
          "status": "passed",
          "checkedAt": "2025-05-02T09:15:00Z",
          "flags": []
        },
        {
          "rulesetId": "RS-PHARMA-UK",
          "name": "Pharma UK",
          "version": "2.0",
          "status": "reviewRequired",
          "checkedAt": "2025-05-02T09:16:00Z",
          "flags": [
            {
              "flagId": "FLAG-001",
              "severity": "medium",
              "ruleCode": "PHARMA-UK-7.2",
              "ruleName": "Informal language near health claim",
              "detail": "Emoji characters detected adjacent to a health efficacy statement.",
              "contentRef": "content.body",
              "requiresSignOff": true,
              "signOffTeam": "Medical Affairs"
            },
            {
              "flagId": "FLAG-002",
              "severity": "high",
              "ruleCode": "PHARMA-UK-12.4",
              "ruleName": "Implied efficacy claim",
              "detail": "'Results in days' is an unqualified efficacy claim. Requires medical sign-off before distribution.",
              "contentRef": "content.body",
              "requiresSignOff": true,
              "signOffTeam": "Medical Affairs"
            }
          ]
        }
      ],
      "overallStatus": "reviewRequired",
      "flagCount": 2,
      "lastCheckedAt": "2025-05-02T09:16:00Z"
    },
    "governance": {
      "approvalStatus": "awaitingReview",
      "distributionLocked": true,
      "lockReason": "Unresolved compliance flags require Medical Affairs sign-off",
      "assignedReviewer": {
        "id": "USR-412",
        "username": "sarah.chen",
        "displayName": "Sarah Chen",
        "team": "Medical Affairs"
      },
      "sla": {
        "durationHours": 24,
        "assignedAt": "2025-05-02T09:17:00Z",
        "expiresAt": "2025-05-03T09:17:00Z"
      },
      "approvalActions": []
    },
    "auditTrail": [
      {
        "entryId": "AUD-001",
        "event": "aiGenerationTriggered",
        "timestamp": "2025-05-02T09:14:00Z",
        "actor": "system.ai",
        "detail": {
          "model": "claude-sonnet-4-6",
          "tone": "professional",
          "voiceConstraint": "Brand A",
          "markets": ["UK", "DE", "FR"],
          "rulesetsApplied": ["EU AI Act", "Brand A", "Pharma UK"]
        }
      },
      {
        "entryId": "AUD-002",
        "event": "rulesetCheckPassed",
        "timestamp": "2025-05-02T09:15:00Z",
        "actor": "system.compliance",
        "detail": {
          "ruleset": "EU AI Act",
          "result": "passed",
          "flags": []
        }
      },
      {
        "entryId": "AUD-003",
        "event": "rulesetCheckPassed",
        "timestamp": "2025-05-02T09:15:00Z",
        "actor": "system.compliance",
        "detail": {
          "ruleset": "Brand A",
          "result": "passed",
          "flags": []
        }
      },
      {
        "entryId": "AUD-004",
        "event": "rulesetCheckFailed",
        "timestamp": "2025-05-02T09:16:00Z",
        "actor": "system.compliance",
        "detail": {
          "ruleset": "Pharma UK",
          "result": "reviewRequired",
          "flags": ["FLAG-001", "FLAG-002"]
        }
      },
      {
        "entryId": "AUD-005",
        "event": "reviewerAssigned",
        "timestamp": "2025-05-02T09:17:00Z",
        "actor": "system.governance",
        "detail": {
          "assignedTo": "sarah.chen",
          "team": "Medical Affairs",
          "slaHours": 24,
          "reason": "Pharma UK flags require human sign-off"
        }
      }
    ]
  }
}
```
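Because the record is structured data, its internal consistency can be checked mechanically. The Python sketch below runs a few assumed invariants against a trimmed copy of the example: `flagCount` matches the listed flags, `overallStatus` matches the ruleset results, distribution stays locked while sign-off is outstanding, and every flag appears in the audit trail. The `decision` key on approval actions is hypothetical, since the example leaves `approvalActions` empty.

```python
import json

# A trimmed copy of the example entity, keeping only the fields the checks read.
ENTITY_JSON = """
{
  "entity": {
    "id": "CB-29401",
    "compliance": {
      "appliedRulesets": [
        {"name": "EU AI Act", "status": "passed", "flags": []},
        {"name": "Brand A", "status": "passed", "flags": []},
        {"name": "Pharma UK", "status": "reviewRequired", "flags": [
          {"flagId": "FLAG-001", "requiresSignOff": true},
          {"flagId": "FLAG-002", "requiresSignOff": true}
        ]}
      ],
      "overallStatus": "reviewRequired",
      "flagCount": 2
    },
    "governance": {"distributionLocked": true, "approvalActions": []},
    "auditTrail": [
      {"event": "aiGenerationTriggered", "detail": {"model": "claude-sonnet-4-6"}},
      {"event": "rulesetCheckFailed", "detail": {"ruleset": "Pharma UK", "flags": ["FLAG-001", "FLAG-002"]}}
    ]
  }
}
"""


def consistency_errors(document: dict) -> list[str]:
    """Check assumed invariants between the compliance, governance and audit sections."""
    entity = document["entity"]
    compliance, governance = entity["compliance"], entity["governance"]
    rulesets = compliance["appliedRulesets"]
    flags = [flag for ruleset in rulesets for flag in ruleset["flags"]]
    errors = []
    if compliance["flagCount"] != len(flags):
        errors.append(f"flagCount is {compliance['flagCount']} but {len(flags)} flags are listed")
    expected = "passed" if all(r["status"] == "passed" for r in rulesets) else "reviewRequired"
    if compliance["overallStatus"] != expected:
        errors.append(f"overallStatus should be {expected}")
    # Hypothetical: an approval action is recorded as {"decision": "approve", ...}.
    approved = any(a.get("decision") == "approve" for a in governance["approvalActions"])
    needs_sign_off = any(flag["requiresSignOff"] for flag in flags)
    if needs_sign_off and not approved and not governance["distributionLocked"]:
        errors.append("distribution is unlocked while sign-off is outstanding")
    logged = {fid for entry in entity["auditTrail"] for fid in entry["detail"].get("flags", [])}
    errors.extend(f"{flag['flagId']} has no audit trail entry"
                  for flag in flags if flag["flagId"] not in logged)
    return errors


print(consistency_errors(json.loads(ENTITY_JSON)))  # []
```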

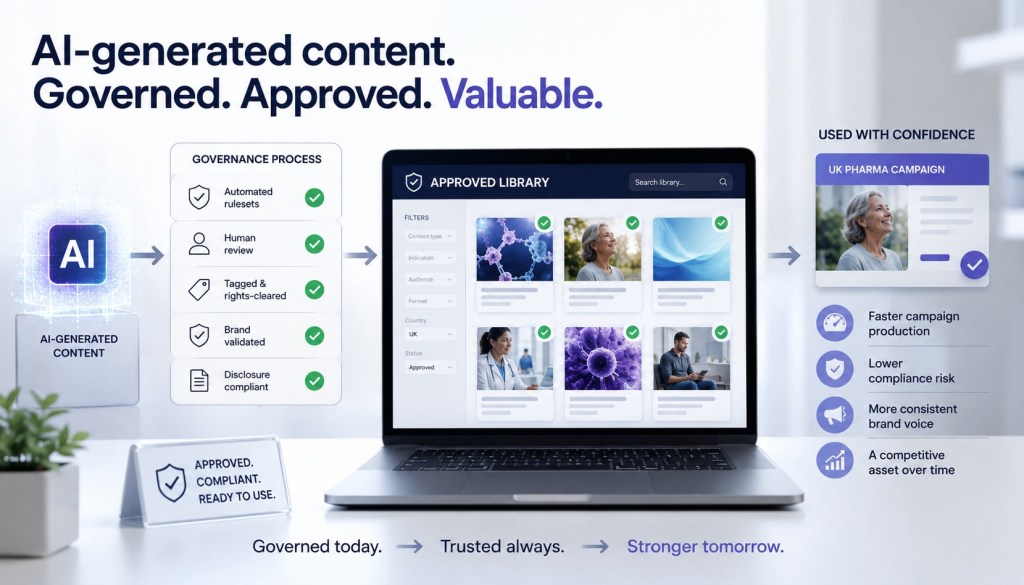

Why this matters beyond compliance

The regulatory case is real and it’s here. But the operational argument is equally strong.

Every block that passes through this governance process and enters the approved library is genuinely valuable.

It’s been checked against automated rulesets and reviewed by a qualified human. It’s tagged, rights-cleared, brand-validated, and disclosure-compliant.

The next campaign that needs a UK pharma-approved hero block can draw from the library rather than starting from scratch.

Over time the library becomes a competitive asset – faster campaign production, lower compliance risk, more consistent brand voice.

That’s the compounding value of governance done properly. It’s not just risk mitigation. It’s operational efficiency built on a foundation of compliance.

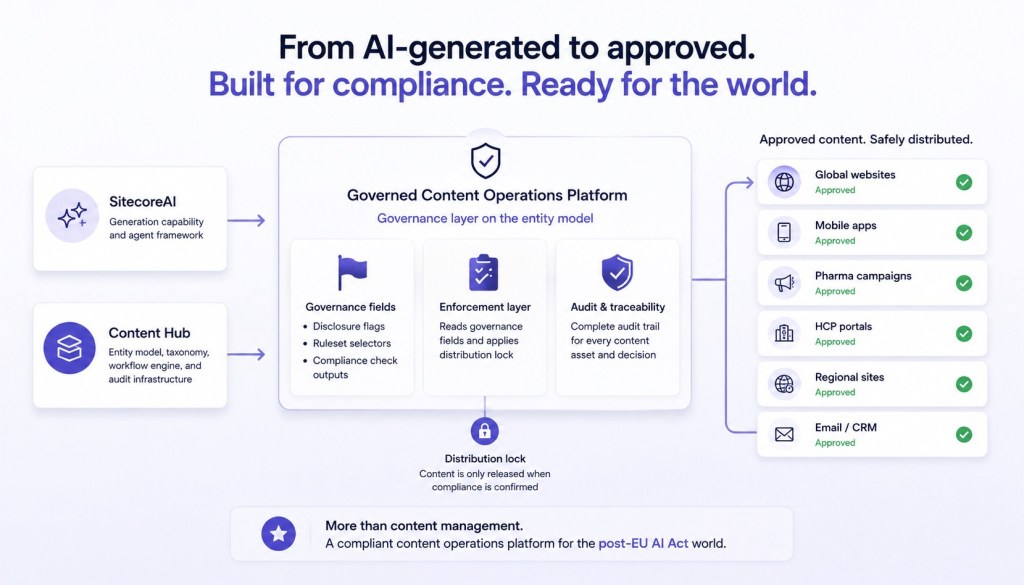

What Sitecore would need to build

Content Hub already has the entity model, the taxonomy, the workflow engine, and the audit infrastructure.

SitecoreAI already has the generation capability and the agent framework.

The gap is a set of governance-specific field types on the entity model – disclosure flags, ruleset selectors, compliance check outputs – and an enforcement layer that reads those fields to apply the distribution lock.
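As a rough illustration of what governance-specific field types might mean, here is a Python sketch in which each governance property is declared with a field type and validated against it. The `FieldType` values, the `appliedRulesetIds` property name and the validation rules are hypothetical; the ruleset IDs and status values are taken from the JSON example. A missing governance field counts as invalid, so an enforcement layer could treat an incomplete record as locked.

```python
from enum import Enum


class FieldType(Enum):
    DISCLOSURE_FLAG = "disclosureFlag"      # boolean set at generation time
    RULESET_SELECTOR = "rulesetSelector"    # list of ruleset IDs
    COMPLIANCE_OUTPUT = "complianceOutput"  # status written by a check


KNOWN_RULESETS = {"RS-EU-AI-ACT", "RS-BRAND-A", "RS-PHARMA-UK"}
COMPLIANCE_STATUSES = {"passed", "reviewRequired"}

# Hypothetical field definitions: governance property name -> field type.
GOVERNANCE_FIELDS = {
    "aiGeneratedContent": FieldType.DISCLOSURE_FLAG,
    "publicDisclosureRequired": FieldType.DISCLOSURE_FLAG,
    "deepfakeSyntheticMedia": FieldType.DISCLOSURE_FLAG,
    "appliedRulesetIds": FieldType.RULESET_SELECTOR,
    "overallStatus": FieldType.COMPLIANCE_OUTPUT,
}


def is_valid(field_type: FieldType, value: object) -> bool:
    if field_type is FieldType.DISCLOSURE_FLAG:
        return isinstance(value, bool)
    if field_type is FieldType.RULESET_SELECTOR:
        return isinstance(value, list) and all(isinstance(v, str) and v in KNOWN_RULESETS for v in value)
    return isinstance(value, str) and value in COMPLIANCE_STATUSES


def invalid_fields(properties: dict) -> list[str]:
    """Governance fields that are missing or hold a value their type doesn't allow."""
    return [name for name, field_type in GOVERNANCE_FIELDS.items()
            if name not in properties or not is_valid(field_type, properties[name])]


block = {
    "aiGeneratedContent": True,
    "publicDisclosureRequired": True,
    "deepfakeSyntheticMedia": False,
    "appliedRulesetIds": ["RS-EU-AI-ACT", "RS-BRAND-A", "RS-PHARMA-UK"],
    "overallStatus": "reviewRequired",
}
print(invalid_fields(block))                             # []
print(invalid_fields({**block, "overallStatus": "ok"}))  # ['overallStatus']
```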

That’s a meaningful but tractable build. And it would make Content Hub not just a content operations platform but a compliant content operations platform.

In a post-EU AI Act world, those are two very different propositions.
